Debt-to-Income Ratio Calculator

Based on industry standards, you should aim for 43% or below for most loan approvals.

As explained in the article, the 43% debt-to-income ratio is a critical threshold for loan approval. Automated underwriting systems use this calculation to determine your eligibility. If your ratio exceeds 43%, you may face challenges with loan approval.
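For reference, a debt-to-income ratio is total monthly debt payments divided by gross monthly income. Here is a minimal sketch of that arithmetic. The function name and the borrower figures are made up for illustration, and only the 43% threshold comes from the text above.

```python
def debt_to_income_ratio(monthly_debt_payments, gross_monthly_income):
    """Return DTI as a percentage: monthly debt payments / gross monthly income."""
    if gross_monthly_income <= 0:
        raise ValueError("gross monthly income must be positive")
    return 100.0 * sum(monthly_debt_payments) / gross_monthly_income


# Hypothetical borrower; all figures are illustrative only.
debts = [1500.0, 350.0, 200.0]  # housing payment, car loan, minimum card payments
income = 6000.0                 # gross (pre-tax) monthly income

DTI_LIMIT = 43.0  # threshold cited in the text

dti = debt_to_income_ratio(debts, income)
print(f"DTI: {dti:.1f}%")
print("within limit" if dti <= DTI_LIMIT else "above limit")
```

With these numbers the borrower pays $2,050 a month against $6,000 of income, which lands under the 43% line.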

What Automated Underwriting Actually Does

Automated underwriting isn’t magic. It’s software that looks at your financial data (credit score, income, debts, assets) and decides in minutes whether you qualify for a loan. In most cases, no human reviews your W-2s and no one calls your employer. Instead, a system powered by artificial intelligence runs your numbers against thousands of past loans and predicts your risk level. The result? A decision in 15 minutes instead of two weeks.

Back in the 1990s, Fannie Mae and Freddie Mac built the first versions of this tech. Their systems, Desktop Underwriter and Loan Prospector, were the start of something big. Today, automated underwriting handles 87% of all U.S. mortgage applications, according to the Consumer Financial Protection Bureau. That’s not a niche tool anymore. It’s the backbone of modern lending.

How It Works: The Four Parts Behind the Scenes

There are four moving pieces in every automated underwriting system:

- Data Input: The system pulls your credit report from Experian, Equifax, or TransUnion. It checks your income through payroll services like ADP. It verifies tax records through IRS transcript services such as the Income Verification Express Service (IVES). Everything is pulled in automatically.

- Rules-Based Logic: Lenders set rules like “Debt-to-income ratio can’t exceed 43%” or “Credit score must be above 620.” The system checks your numbers against those rules.

- AI Risk Models: This is where it gets smarter. The AI doesn’t just follow rules. It learns from past loans. If people with your income, credit history, and down payment amount paid back their loans 92% of the time, the system flags you as low risk. It’s not guessing. It’s estimating your risk from real outcomes.

- Real-Time Decision: Within 2 to 15 minutes, you get a response: approved, denied, or flagged for manual review. About 78% of applications get an instant answer, according to LoanPro’s 2022 data.

It’s fast. It’s consistent. And by the studies cited below, it makes fewer errors than human underwriters.
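The four parts above can be sketched as a small decision function. This is a toy illustration, not how Desktop Underwriter or any real system works. The two rule thresholds come from the examples in the list (with 620 treated as a minimum), while `MIN_REPAYMENT_RATE`, the `Application` fields, and the use of a plain repayment rate in place of a trained risk model are all assumptions.

```python
from dataclasses import dataclass

# Rule thresholds from the examples in the text; real lenders set their own.
MAX_DTI_PERCENT = 43.0
MIN_CREDIT_SCORE = 620  # treated here as a minimum
# Hypothetical cutoff for the risk step; not from the article.
MIN_REPAYMENT_RATE = 0.90


@dataclass
class Application:
    credit_score: int
    dti_percent: float
    income_verified: bool  # stands in for the data-input step


def failed_rules(app):
    """Rules-based logic: return the names of the rules the application fails."""
    failures = []
    if app.dti_percent > MAX_DTI_PERCENT:
        failures.append("dti_above_limit")
    if app.credit_score < MIN_CREDIT_SCORE:
        failures.append("credit_score_below_minimum")
    return failures


def repayment_rate(similar_loan_outcomes):
    """Risk step: share of similar past loans that were repaid (True = repaid)."""
    return sum(similar_loan_outcomes) / len(similar_loan_outcomes)


def decide(app, similar_loan_outcomes):
    """Real-time decision: 'approved', 'denied', or 'manual_review'."""
    if not app.income_verified or not similar_loan_outcomes:
        return "manual_review"  # missing data goes to a human
    if failed_rules(app):
        return "denied"
    if repayment_rate(similar_loan_outcomes) >= MIN_REPAYMENT_RATE:
        return "approved"
    return "manual_review"


# Hypothetical applicant whose look-alike borrowers repaid 92% of the time.
app = Application(credit_score=700, dti_percent=36.0, income_verified=True)
similar = [True] * 92 + [False] * 8
print(decide(app, similar))
```

Routing unverifiable or borderline files to `manual_review` rather than denying them mirrors the three outcomes the list describes.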

Why It’s Better Than Human Underwriters

Human underwriters are good, but they’re slow and inconsistent. A 2022 study by Blooma.ai found that automated systems reduce human error by 31%. Why? Because people interpret rules differently. LoanPro found that when two underwriters looked at the exact same application, they made different decisions 17% of the time.

Automated systems don’t get tired. They don’t have bad days. They apply the same rules to everyone. And they’re getting smarter. In 2018, systems used about 83 data points. Today, they use 278. That means they can spot subtle patterns, like how often someone pays rent on time, even if they don’t have a credit card.

Dr. Susan Woodward, former Fannie Mae economist, says automated underwriting cut the cost of originating a conventional mortgage by $350 per loan. That’s money saved for lenders, some of which can be passed on to borrowers in lower rates.

Where It Still Falls Short

Automated underwriting isn’t perfect. About 22% of applications still need a human to step in. Why? Because life isn’t always clean.

If you’re self-employed, your income isn’t on a W-2. It’s messy. Spikes and dips. Invoices, bonuses, seasonal work. Most systems can’t handle that well. That’s why 63% of cases that get kicked to manual review involve self-employed borrowers, according to Ellie Mae’s 2023 report.
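To see why uneven income trips up an automated check, here is a rough sketch that measures variability as the coefficient of variation (standard deviation divided by mean) of monthly income. The 0.25 cutoff, the function names, and the income figures are all hypothetical. The article does not say how any real system measures income stability.

```python
from statistics import mean, pstdev

# Hypothetical cutoff: above this, route the file to a human underwriter.
VARIABILITY_CUTOFF = 0.25


def income_variability(monthly_income):
    """Coefficient of variation: population standard deviation / mean."""
    avg = mean(monthly_income)
    if avg <= 0:
        raise ValueError("average income must be positive")
    return pstdev(monthly_income) / avg


def needs_manual_review(monthly_income):
    """Flag income streams that swing too much for a simple rule to judge."""
    return income_variability(monthly_income) > VARIABILITY_CUTOFF


# Illustrative figures only.
salaried = [5000.0] * 12
freelance = [2000.0, 9000.0, 3000.0, 8000.0]

print(needs_manual_review(salaried))
print(needs_manual_review(freelance))
```

Both borrowers here average a similar monthly income, but only the freelancer's swings exceed the cutoff, which is the kind of file that ends up in manual review.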

Same with non-traditional credit. If you’ve never had a credit card but pay your rent and utilities on time, many systems won’t recognize that. Fannie Mae’s 2023 update added rental payment history as a data point, but not all lenders use it yet.

And sometimes, you get a denial with no real explanation. Just a code. A Reddit user wrote in September 2023: “My application was marked ineligible with no specific reason, just a generic code my loan officer couldn’t explain.” That’s the black box problem: you don’t know why you were denied. Federal law requires lenders to give you the reasons, but a notice that only says “risk score too low” isn’t much help.

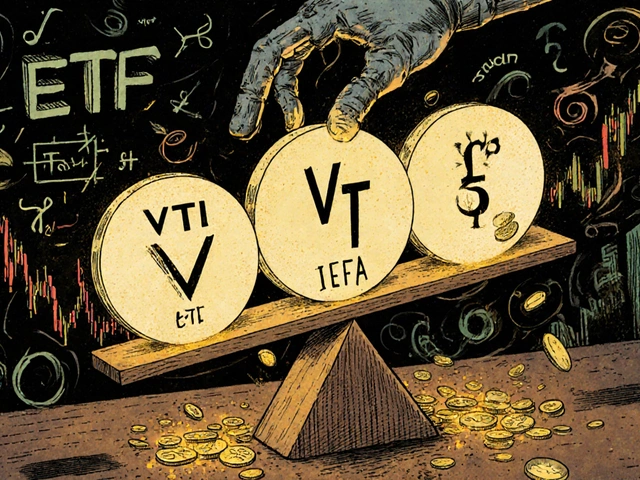

Who’s Leading the Market

Fannie Mae’s Desktop Underwriter still runs the show. It handles 48% of all mortgage underwriting in the U.S. Freddie Mac’s Loan Product Advisor (formerly Loan Prospector) is second at 35%. Together, they account for 83% of the market.

But new players are catching up. Inscribe AI, Arya.ai, and Ellie Mae’s Encompass AI are growing fast. Inscribe alone grew 37% year-over-year in 2022-2023, according to HousingWire. These platforms are more flexible. They integrate with more banking systems (over 150, according to Biz2x). They’re also building better explainability features to answer the “why?” question.

And it’s not just mortgages. Auto lenders are using automated underwriting too. 68% of them now use some form of AI decisioning, according to the Consumer Bankers Association. Personal loans? 52%. This tech is spreading.

What You Need to Know If You’re Applying

If you’re applying for a loan right now, here’s what matters:

- Get your credit report in order. Check for errors. Pay down debt. Aim for a FICO above 680. The higher, the better.

- Keep your income steady. If you’re self-employed, make sure your tax returns show consistent income over two years. Lenders want to see stability.

- Have documents ready. Pay stubs, bank statements, W-2s. Even if the system pulls data automatically, having backups helps if it flags your file.

- Ask for clarity. If you’re denied, ask for the specific reason. Under the Equal Credit Opportunity Act, lenders must provide it. If they say “risk score too low,” push back. Ask what factors hurt your score.

And don’t assume a denial is final. If your application gets flagged for manual review, that’s not a rejection. It’s a second chance. A human underwriter can look at your full story: your rental history, your side gigs, your savings. That’s where automation ends and judgment begins.

The Future: What’s Coming Next

By 2027, McKinsey predicts 95% of prime mortgage applications will get an automated decision. That’s almost all of them.

Here’s what’s next:

- Open banking integration: Lenders will connect directly to your bank accounts (with your permission) to see real-time cash flow, not just snapshots from statements.

- Real-time income verification: Payroll APIs will confirm your paycheck as it hits your account. No more waiting for pay stubs.

- Explainable AI: New systems will show you exactly why you were approved or denied, like a scorecard: “Your debt-to-income ratio was 45%, which is above our 43% limit.”

Regulators are pushing for this. The CFPB and FDIC now require lenders to validate their AI models. They don’t want hidden bias. They want transparency.
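The scorecard idea from the list above could look something like the sketch below: each failed check produces a plain-language reason instead of a bare code. The function name, the default thresholds, and the credit score wording are assumptions for illustration. Only the debt-to-income sentence is taken from the text.

```python
def explain(dti_percent, credit_score, max_dti=43.0, min_score=620):
    """Return a list of plain-language reasons, one per failed check."""
    reasons = []
    if dti_percent > max_dti:
        reasons.append(
            f"Your debt-to-income ratio was {dti_percent:.0f}%, "
            f"which is above our {max_dti:.0f}% limit."
        )
    if credit_score < min_score:
        reasons.append(
            f"Your credit score was {credit_score}, "
            f"which is below our minimum of {min_score}."
        )
    return reasons


# Hypothetical applicant who fails only the debt-to-income check.
for reason in explain(dti_percent=45, credit_score=700):
    print(reason)
```

An empty list means no rule was failed, so a denial in that case would have to be explained by something else, such as the risk model.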

Final Thought: It’s Not Replacing People, It’s Helping Them

Automated underwriting doesn’t eliminate human judgment. It frees it up. Instead of spending hours checking numbers, underwriters now focus on complex cases: self-employed borrowers, unique asset structures, non-QM loans. The boring, repetitive work is done by machines. The hard, nuanced decisions are left to people.

That’s the real win. Faster approvals for the majority. Better service for the rest. And more fairness, if the systems are built right.

Comments

Kenny McMiller

November 26, 2025

Automated underwriting is basically the neoliberal dream made code: remove human subjectivity, optimize for efficiency, and call it fairness. But let’s be real: when your credit score is the only language the system speaks, what happens to the guy who pays cash for everything because he distrusts banks? Or the immigrant building credit through rent? The algorithm doesn’t see context. It sees data points. And data points don’t have trauma, resilience, or late-night hustle. We’re outsourcing moral judgment to a model trained on past biases disguised as ‘patterns.’

It’s not that AI is wrong. It’s that it’s *amoral*. It doesn’t care if you got laid off because your factory closed. It just sees a dip in income and flags you as ‘high risk.’ That’s not smart underwriting. That’s statistical discrimination wrapped in a sleek UI.

Dave McPherson

November 28, 2025

LMAO this whole ‘AI is fairer’ narrative is peak Silicon Valley delusion. You think a machine that reads your bank statements like a Yelp review is gonna understand that your ‘spike’ was your mom’s cancer fund? Nah. The system’s got 278 data points but zero soul.

And don’t even get me started on ‘explainable AI.’ You think some bot is gonna say, ‘Sorry, Dave, your credit score sucks because you once bought a $12 burrito on a 3am bender and your bank flagged it as ‘unusual spending’?’ No. It just says ‘risk score too low’ like a robot bartender who won’t refill your drink because you wore socks with sandals.

Meanwhile, Fannie Mae’s still running on 2008-era code with a fresh coat of ML paint. It’s not innovation. It’s institutional inertia with a TED Talk.

Julia Czinna

November 30, 2025

I appreciate how this breaks down the mechanics, but I’ve seen firsthand how these systems can crush people who don’t fit the mold. My cousin, a freelance artist, got denied a mortgage because her income varied month to month, even though she had steady clients and savings. The system didn’t see ‘creative stability,’ it saw ‘inconsistent cash flow.’

It’s not the tech’s fault, really. It’s the design. If we’re going to rely on automation, we need to build in flexibility for non-traditional paths. Not just ‘add rental history,’ but ‘understand gig economy rhythms.’ Otherwise, we’re just automating exclusion.

And honestly? The fact that 22% still go to manual review proves humans still matter. Maybe the goal isn’t to replace them, but to elevate them, to let them focus on the stories behind the numbers.

Laura W

December 1, 2025

YESSSS. This is the future and I’m here for it. My cousin got a personal loan in 9 minutes last week: no calls, no forms, just a quick sync with her bank. She was crying happy tears. That’s the power of this tech when it works right.

And yeah, the black box sucks, but companies like Inscribe are fixing it with real-time scorecards. Imagine getting a breakdown like: ‘Your rent history improved your score by 12 points!’ That’s revolutionary for people who’ve been locked out of credit for years.

Also, auto lenders using this? Yes. My brother got a car loan with zero paperwork. He’s a mechanic who pays cash for everything. The AI saw his PayPal income from side gigs and approved him. That’s equity. That’s inclusion. Stop hating on the machine. It’s just doing what humans were too slow or biased to do.

Also, FICO 680+? That’s the new 700. Get your numbers in order, fam. The system’s not out to get you. It’s out to help you if you play by the new rules.

RAHUL KUSHWAHA

December 2, 2025

Thank you for this clear explanation. I am from India and we are just starting to adopt such systems. Your point about rental history being a new data point is very important. Many people here do not have credit cards but pay rent on time. This can change lives.

I hope regulators here will push for transparency like CFPB. It is not about replacing humans, but helping them to focus on what matters. 🙏
