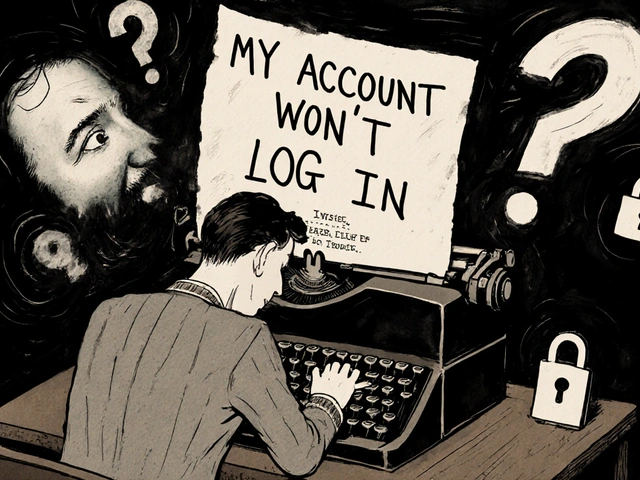

When a customer types "My account won't log in," they’re not just reporting a problem. They’re saying, "I’m frustrated, I need this fixed now, and if you don’t help me, I’m leaving." Natural Language Processing (NLP) is what lets machines hear that hidden message - not just the words, but the urgency, the emotion, the intent behind them.

What NLP Actually Does for Customer Intent

Most people think NLP is just about spell check or chatbots that answer FAQs. That’s the surface. The real power lies in understanding intent - the goal behind the words. A customer might say:

- "I can’t access my account."

- "Why is my login broken?"

- "This app won’t let me in."

Traditional systems see three different phrases. NLP sees one intent: account access failure. It doesn’t just match keywords. It understands context, tone, and even implied urgency. That’s why companies using NLP can handle 2.5 times more customer inquiries without hiring more staff - they’re not just responding to words, they’re responding to needs.
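To make "many phrasings in, one intent out" concrete, here is a toy sketch. It is not how a trained model works: it scores word overlap (Jaccard similarity) against a handful of hand-labeled examples, where a production system would use a learned model. The intent names, example phrases, and stopword list are all assumptions made up for this illustration.

```python
import re

# Hypothetical labeled examples; a real system learns from thousands of messages.
LABELED_EXAMPLES = {
    "account_access_failure": [
        "cannot log in to my account",
        "login not working",
        "app keeps locking me out",
    ],
    "refund_request": [
        "I want my money back",
        "please refund my order",
    ],
}

# Small, hand-picked stopword list (an assumption for this sketch).
STOPWORDS = {"i", "my", "the", "is", "this", "me", "a", "to", "why"}


def tokens(text):
    """Lowercase, normalize curly apostrophes, and drop stopwords."""
    words = re.findall(r"[a-z']+", text.lower().replace("’", "'"))
    return {w for w in words if w not in STOPWORDS}


def jaccard(a, b):
    """Share of words two token sets have in common."""
    if not a or not b:
        return 0.0
    return len(a & b) / len(a | b)


def classify_intent(message):
    """Return (intent, score) for the closest labeled example, or ("unknown", 0.0)."""
    msg = tokens(message)
    best_intent, best_score = "unknown", 0.0
    for intent, examples in LABELED_EXAMPLES.items():
        for example in examples:
            score = jaccard(msg, tokens(example))
            if score > best_score:
                best_intent, best_score = intent, score
    return best_intent, best_score


for text in ["I can't access my account.", "Why is my login broken?", "This app won't let me in."]:
    print(text, "->", classify_intent(text)[0])
```

All three customer messages land on the same label even though none of them matches a stored example word for word. A trained model generalizes much further than word overlap can, but the input and output have the same shape.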

How NLP Reads Between the Lines

NLP doesn’t guess. It learns. Systems are trained on millions of real customer messages labeled with their true intent - like "billing issue," "product complaint," or "want to cancel." Machine learning models, especially transformer networks like BERT, analyze patterns in how people phrase things. They notice that "I’m done with this service" and "I’m switching to a competitor" mean the same thing, even if one is angry and the other is calm.

Here’s what happens under the hood:

- Text classification - sorts messages into categories like "technical support," "refund request," or "feedback."

- Named Entity Recognition - pulls out key details: account numbers, product names, dates.

- Semantic analysis - decodes meaning beyond literal words. "The app crashes every time I open it" isn’t just about crashes - it’s about frustration and reliability concerns.
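The entity-extraction step in the list above can be sketched with plain rules. This is a rule-based stand-in, not a trained Named Entity Recognition model, and every pattern here is an assumption: the "ACC-" account format, the ISO date format, and the product names are invented for illustration.

```python
import re

# Hypothetical formats; adjust to whatever your own systems actually use.
ACCOUNT_PATTERN = re.compile(r"\bACC-\d{6}\b")
DATE_PATTERN = re.compile(r"\b\d{4}-\d{2}-\d{2}\b")
KNOWN_PRODUCTS = ("Premium Plan", "Mobile App")


def extract_entities(message):
    """Pull account numbers, dates, and known product names out of a message."""
    lowered = message.lower()
    return {
        "account_numbers": ACCOUNT_PATTERN.findall(message),
        "dates": DATE_PATTERN.findall(message),
        "products": [p for p in KNOWN_PRODUCTS if p.lower() in lowered],
    }


print(extract_entities("My Mobile App login for ACC-123456 has failed since 2025-03-02."))
```

Rules like these break as soon as customers write dates or account numbers in a format nobody anticipated, which is the gap a trained NER model is meant to close.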

These systems can process millions of emails, chats, and social media comments daily. A bank in Chicago reduced its ticket backlog by 60% in three months by routing complaints directly to the right team based on detected intent - no human needed to read the first message.

Why Old Methods Fail

Before NLP, companies relied on keyword filters. If someone typed "refund," the system flagged it. But what if they said, "I don’t think I should pay for this"? Or "This isn’t what I expected"? Those messages slipped through. Rule-based systems missed 40-50% of real intent, according to 3Cloud Solutions.
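A few lines of code show why keyword filters leak. The keyword list below is a hypothetical example of such a filter; the test messages are the ones quoted above.

```python
# Hypothetical keyword list for a rule-based "refund" filter.
REFUND_KEYWORDS = ("refund", "money back", "reimburse")


def keyword_flags_refund(message):
    """Return True only if the message contains one of the literal keywords."""
    text = message.lower()
    return any(keyword in text for keyword in REFUND_KEYWORDS)


messages = [
    "I want a refund",
    "I don't think I should pay for this",
    "This isn't what I expected",
]

# Messages the filter fails to flag.
missed = [m for m in messages if not keyword_flags_refund(m)]
print(missed)
```

Only the first message is caught. The other two never use a listed keyword, so the filter lets them through no matter how long the list grows.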

Even simple sentiment analysis - labeling messages as "positive" or "negative" - isn’t enough. A customer might say, "This feature is amazing!" but mean the opposite if they’re being sarcastic. Or they might write, "I’m fine," when they’re actually furious. NLP models trained on real human language catch those subtleties. Accuracy jumps from 65% with keyword tools to 85-92% with modern NLP.

Where NLP Struggles

NLP isn’t magic. It has blind spots.

Short messages under five words - like "Help!" or "Broken" - are hard to classify. There’s not enough context. Accuracy drops to 55-65% in those cases.
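One common way to work around this is to stop trusting the classifier on very short or low-confidence messages and ask a follow-up question instead. The sketch below assumes the classifier returns an intent label and a confidence score; the 0.75 threshold and the step names are hypothetical.

```python
MIN_WORDS = 5          # "under five words" cutoff from the text
MIN_CONFIDENCE = 0.75  # hypothetical threshold; tune it on your own data


def next_step(message, predicted_intent, confidence):
    """Decide whether to route automatically, ask for detail, or hand off."""
    if len(message.split()) < MIN_WORDS:
        return "ask_clarifying_question"
    if confidence < MIN_CONFIDENCE:
        return "send_to_human_triage"
    return f"route:{predicted_intent}"


print(next_step("Help!", "technical_support", 0.90))
print(next_step("I was charged twice for my order", "billing_issue", 0.91))
```

The short message gets a clarifying question even though the model sounded confident, because there is too little text to justify that confidence.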

Regional dialects and non-native speakers also trip up models trained mostly on U.S. English. A customer in the UK saying, "I’m chuffed with this," is pleased - but a model that has never seen the word may read it as confusion. Accuracy falls to 60-70% without training data from those dialects.

And then there’s bias. If a model was trained mostly on complaints from native English speakers, it might misread the tone of non-native users. Stanford researcher Dr. Alan Rudolph warns this can lead to unfair treatment - like ignoring legitimate concerns from customers who speak differently. That’s not just a technical problem. It’s an ethical one.

Real Results from Real Companies

Companies aren’t just testing NLP. They’re seeing measurable wins.

- A financial services firm used NLP to detect phrases like "I’m thinking about switching" and triggered automatic retention offers. Churn risk dropped 19% in six months.

- A telecom company cut average handling time by 32% and increased first-contact resolution by 28% after deploying NLP to auto-classify support tickets.

- On Reddit, a customer service manager wrote: "We used to spend hours sorting through 500 tickets a day. Now, NLP does it in 10 minutes. Our team only steps in for the complex ones."

These aren’t outliers. Gartner predicts that by 2025, 85% of customer service interactions will be handled without human agents - mostly because of NLP. And Forrester gives NLP-based intent analysis a 4.5 out of 5 for ROI in enterprise settings.

Choosing the Right Tool

You don’t need to build your own AI. Most businesses use platforms like:

- Google Cloud Natural Language API - easy to integrate, great for startups. Works with Python, Java, Node.js.

- Amazon Comprehend - strong for multilingual support and sentiment analysis.

- NUACOM and Zonka Feedback - built for customer service teams, with no-code dashboards and workflow automation.

Implementation time varies. If you use a ready-made API, you can go live in 2-4 weeks. If you need custom training - say, for medical billing codes or insurance claims - expect 12-16 weeks. The key? Use industry-specific data. Companies that trained their models on their own customer conversations were 68% more successful than those using generic datasets.

Compliance and Ethics

NLP handles personal data. That means rules apply.

- Healthcare - must follow HIPAA. No storing patient names or IDs in training data unless encrypted.

- Finance - SEC and FINRA require audit trails. Every decision the AI makes must be explainable.

- Europe - the EU AI Act classifies customer intent systems as high-risk if they influence service access or pricing.
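Two habits help with the rules above: strip obvious identifiers before text is stored, and log every automated decision with enough detail to review it later. The sketch below illustrates both. It is a starting point only and does not by itself satisfy HIPAA, SEC/FINRA, or the EU AI Act. The redaction patterns, the record fields, and the `ticket_id` and `model_version` values are assumptions for this example.

```python
import re
from datetime import datetime, timezone

# Assumption: long digit runs and email addresses are the identifiers to hide.
EMAIL_PATTERN = re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b")
ID_PATTERN = re.compile(r"\b\d{6,}\b")


def redact(message):
    """Replace email addresses and long digit runs with placeholders."""
    message = EMAIL_PATTERN.sub("[EMAIL]", message)
    return ID_PATTERN.sub("[ID]", message)


def audit_record(ticket_id, message, intent, confidence, model_version):
    """Build one reviewable log entry for an automated classification."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "ticket_id": ticket_id,
        "redacted_text": redact(message),
        "intent": intent,
        "confidence": confidence,
        "model_version": model_version,
    }


record = audit_record(
    "T-1001",
    "Contact jane.doe@example.com about patient 12345678.",
    "billing_issue",
    0.88,
    "intent-model-v1",
)
print(record["redacted_text"])
```

Regex redaction misses names and free-text identifiers, so treat it as a first filter and have your compliance team decide what actually needs to be removed or encrypted.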

And privacy isn’t optional. Top platforms now offer end-to-end encryption and granular access controls. But you still need to ask: Are we using this to help customers - or just to push them toward upsells? Ethical NLP means transparency, fairness, and human oversight.

The Future: NLP + Generative AI

The next leap isn’t just understanding intent - it’s responding to it in real time.

Microsoft’s 2023 update to Dynamics 365 now ties NLP directly to CRM workflows. If a customer says, "I want to upgrade my plan," the system doesn’t just tag it. It auto-generates a personalized upgrade offer and sends it to the account rep with a suggested script.

Gartner says by 2026, half of customer service teams will use generative AI to write live responses based on intent. Imagine a chatbot that doesn’t just say, "I understand your concern," but writes a custom apology, offers a discount, and schedules a callback - all in the customer’s tone.

This isn’t sci-fi. It’s already happening in early adopter companies. But it only works if the NLP foundation is solid. Bad intent detection leads to bad AI responses - and that makes customers angrier.

Where to Start

Don’t try to boil the ocean. Pick one high-volume, low-complexity channel - like email support or live chat. Track the top 5 intents you see most often. Use a tool like Google Cloud or Zonka Feedback to start classifying them automatically.

Then measure:

- How many tickets are being auto-classified?

- Is first-contact resolution improving?

- Are fewer customers saying, "I had to repeat myself"?
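These three measures are easy to compute once tickets are exported. The sketch below assumes each ticket is a dictionary with hypothetical fields `auto_classified`, `contacts_to_resolve`, and `text`; your helpdesk export will use its own names.

```python
# Hypothetical ticket records; field names are assumptions for this sketch.
TICKETS = [
    {"auto_classified": True, "contacts_to_resolve": 1, "text": "Card declined at checkout"},
    {"auto_classified": True, "contacts_to_resolve": 2, "text": "I had to repeat myself to three agents"},
    {"auto_classified": False, "contacts_to_resolve": 1, "text": "Where is my invoice?"},
    {"auto_classified": True, "contacts_to_resolve": 3, "text": "Still locked out of my account"},
]


def share(tickets, predicate):
    """Fraction of tickets for which the predicate is true (0.0 if there are none)."""
    if not tickets:
        return 0.0
    return sum(1 for t in tickets if predicate(t)) / len(tickets)


def weekly_metrics(tickets):
    """Compute the three measures listed above for one batch of tickets."""
    return {
        "auto_classified_rate": share(tickets, lambda t: t["auto_classified"]),
        "first_contact_resolution_rate": share(tickets, lambda t: t["contacts_to_resolve"] == 1),
        "repeat_complaint_rate": share(tickets, lambda t: "repeat myself" in t["text"].lower()),
    }


print(weekly_metrics(TICKETS))
```

Run it on the same channel every week and compare the numbers against the week before you switched classification on.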

Start small. Scale fast. The goal isn’t to replace humans - it’s to free them from repetitive work so they can handle the complex, emotional, human moments that machines still can’t touch.

NLP doesn’t just make customer service faster. It makes it smarter. And in 2025, that’s the only thing that matters.

How accurate is NLP at detecting customer intent?

Well-trained NLP models achieve 85-92% accuracy in classifying customer intent under normal conditions. Accuracy drops to 60-70% for non-native speakers or regional dialects, and to 55-65% for queries under five words. The key is training the model on real, industry-specific conversations - not generic datasets.

Can NLP understand sarcasm or emotional tone?

Modern NLP models can detect sarcasm and tone with moderate success - especially when trained on large datasets that include emotional language. For example, a model might recognize that "Oh great, another delay" is negative, even though "great" on its own reads as positive. But it’s not perfect. Sarcasm, cultural idioms, and heavy irony still trip up systems. Human review is still needed for edge cases.

Is NLP better than manual analysis of customer feedback?

Yes, by a wide margin. A human analyst can process 1,000-2,000 customer messages per week with about 65% accuracy. NLP systems handle millions daily with 85-92% accuracy. They also spot patterns humans miss - like a sudden spike in "I’m leaving" phrases across multiple channels. NLP scales. Humans don’t.

What industries benefit most from NLP for customer intent?

Banking (78% adoption), telecommunications (72%), and retail (65%) lead in NLP use. These industries handle high volumes of repetitive inquiries - billing, account access, returns - that NLP automates efficiently. Healthcare and insurance are catching up, but face stricter compliance rules. Any business with over 10,000 customer interactions per month sees a clear ROI.

Do I need a data scientist to implement NLP?

Not necessarily. Platforms like Zonka Feedback and NUACOM offer no-code dashboards where business users can train intent models by labeling sample messages. A business analyst can learn the basics in 15-20 hours. If you’re building a custom model from scratch, then yes - you’ll need data scientists. But for most companies, off-the-shelf tools with customization options are enough.

How long does NLP implementation take?

Using a cloud API like Google Cloud Natural Language, you can be operational in 2-4 weeks. Custom models trained on your own data take 12-16 weeks. The biggest delay isn’t tech - it’s gathering enough labeled examples. You need at least 500-1,000 real customer messages per intent category to train the model effectively.
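Before you start training, it helps to check whether each intent has enough labeled examples. This sketch counts labels and reports the shortfall against the lower bound of 500 mentioned above; the intent names and counts are made up.

```python
from collections import Counter

MIN_EXAMPLES_PER_INTENT = 500  # lower bound cited in the text


def underrepresented_intents(labels, minimum=MIN_EXAMPLES_PER_INTENT):
    """Map each intent with too few examples to the number still missing."""
    counts = Counter(labels)
    return {intent: minimum - n for intent, n in counts.items() if n < minimum}


# Hypothetical label column from an exported, hand-labeled dataset.
labels = ["billing_issue"] * 600 + ["want_to_cancel"] * 120
print(underrepresented_intents(labels))
```

Here "want_to_cancel" is 380 examples short, so that is where the next round of labeling should go.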

What are the biggest risks of using NLP for customer intent?

The biggest risks are bias and lack of transparency. If your training data mostly comes from native English speakers, the system may misread non-native customers. Also, if the AI makes decisions - like denying a refund - without explaining why, customers lose trust. Always keep a human in the loop for high-stakes actions, and audit your model regularly for fairness.

Can NLP work with multiple languages?

Yes, but only if trained for each language. Google’s 2023 BERT updates improved cross-language accuracy by nearly 19%, but models still need native-language data to understand idioms, slang, and cultural context. A Spanish-speaking customer saying "Está todo mal" might mean "Everything’s broken," but a model trained only on English could misinterpret it as a general complaint. Always include local language samples in training.

Companies that get NLP right don’t just save time. They build trust. When customers feel understood - not just answered - they stay longer, spend more, and recommend you to others. That’s the real value of understanding intent.

Comments

Graeme C

November 27, 2025

NLP’s accuracy drop to 55% on five-word queries? That’s not a bug - it’s a feature of lazy design. If your system can’t handle ‘Help!’ or ‘Broken,’ you’re not using NLP, you’re using a glorified keyword bot. Real intent detection doesn’t wait for full sentences - it reads between the cracks. I’ve seen systems that infer panic from typing speed, capitalization bursts, and emoji abandonment. If you’re still training on ‘I can’t log in’ and calling it a day, you’re leaving 70% of your customers in the dark.

And don’t get me started on ‘regional dialects.’ ‘Chuffed’ isn’t a dialect issue - it’s a data bias issue. Your training set is probably 80% US-centric. Fix the data, not the model. This isn’t rocket science. It’s basic inclusivity.

Also - no one’s talking about the real win: NLP catching passive-aggressive churn signals. ‘I’m fine’ with three exclamation marks? That’s not neutral. That’s a red alert. Your CRM should be screaming, not just tagging it as ‘neutral sentiment.’

Stop optimizing for accuracy on textbook phrases. Start optimizing for the chaos of real human frustration. That’s where the value is.

And yes - I’ve built this. And yes - it worked. 40% reduction in escalations in six weeks. You’re welcome.

Astha Mishra

November 29, 2025

It is truly fascinating, if one pauses to consider the deeper philosophical implications of machines attempting to decode the unspoken anguish of human beings, isn't it? We have come to a point where algorithms, trained on millions of fragments of despair, now attempt to interpret the trembling silence between words - the sigh embedded in 'I'm fine,' the trembling hope in 'maybe next time.'

And yet, we remain so certain that this is progress. But what does it mean, when the very tools meant to understand us are built on datasets that erase nuance, flatten emotion into categories, and reduce the complexity of lived experience to a probability score? Is this empathy, or merely a sophisticated mimicry?

I recall an elderly woman in rural India, typing 'no money pay' with broken English, her message dismissed as 'low confidence' by the system. She didn't need a refund - she needed to be heard. And yet, the machine offered a canned apology and moved on.

Perhaps the real question isn't how accurate NLP is - but whether we, as a society, are becoming comfortable with being understood by systems that cannot feel. Are we trading genuine connection for efficiency? And if so - at what cost to our humanity?

I do not oppose technology. I oppose the quiet surrender of our souls to the cold logic of optimization.

Still - I am hopeful. Because if we can teach machines to sense intent, perhaps one day, they will teach us to listen better to each other.

Kenny McMiller

November 30, 2025

Bro, NLP’s 85-92% accuracy sounds slick until you realize half of that’s just matching ‘refund’ + ‘urgent’ + ‘angry’ and calling it a day. The real magic isn’t in BERT - it’s in the damn fine-tuning. Most companies throw a few hundred labeled tickets into a Google API and call it AI. No wonder it fails on ‘I’m done’ vs. ‘I’m done with this’ - they didn’t even clean their data.

And don’t get me started on the ‘no-code’ tools. You think a marketing guy labeling 200 emails as ‘billing issue’ knows the difference between ‘I can’t pay’ and ‘I won’t pay’? Nah. He just clicks ‘negative’ and moves on.

Real NLP? You need domain-specific embeddings. You need context windows that span multiple interactions. You need to track whether the user’s tone shifted from ‘confused’ to ‘furious’ across three emails. That’s not plug-and-play. That’s engineering.

Also - sarcasm? Please. The model thinks ‘Oh great’ is positive because ‘great’ = +1 sentiment. If you’re not training on Reddit threads and Twitter rants, you’re training on fantasy land.

Bottom line: NLP isn’t the hero. The people who clean the data and care about the edge cases? That’s the hero.

Dave McPherson

December 1, 2025

Wow. A 10,000-word essay on how to use a chatbot. Groundbreaking. I’m literally crying. Did you also include a flowchart of how to press ‘submit’? This reads like a corporate whitepaper written by someone who thinks ‘transformer networks’ is a yoga pose. You didn’t mention that NLP still can’t tell if someone’s being sarcastic unless they add a 🙃. And you call this ‘smarter customer service’? It’s just faster miscommunication with a fancy API sticker on it. Also - ‘Zonka Feedback’? That’s a tool for people who think ‘no-code’ means ‘no thinking.’ You’re not building AI. You’re outsourcing your brain to a SaaS dashboard. Congrats. You’ve automated customer service into a glitchy IKEA manual. I miss the days when a human said, ‘I’m sorry you’re upset.’ Now we get ‘Your sentiment has been classified as moderately negative. Would you like a 10% discount?’ No. I want a person who gives a shit. But hey - at least your churn rate looks good on the quarterly deck.
